Introduction to Semantic Kernel (SK)

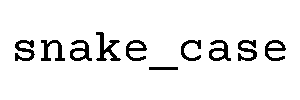

Imagine you’re building a smart assistant that can truly understand and interact with your code, combining the power of traditional programming with the wizardry of Large Language Models (LLMs). That’s exactly where Microsoft’s Semantic Kernel (SK) steps in. It’s an open-source SDK that lets developers combine conventional languages like C# or Python with AI services such as OpenAI, Azure OpenAI, or Hugging Face. Think of SK as a bilingual translator that speaks fluent code and AI.

At its core, SK is an orchestration layer: the glue that binds your code to AI models. Instead of wrestling with raw HTTP calls, handling tokens, and parsing JSON response fragments, you let SK handle that plumbing so you can focus on what matters: building smarter apps.

For .NET developers, the benefits are crystal clear. SK provides memory management to help your AI-powered app remember context, manages connections to various AI services via built-in connectors, and offers a structured way to organize your prompts and logic. So rather than writing brittle calls to APIs, you get a robust, maintainable, and scalable approach out of the box.

Setting Up the Development Environment

Ready to dive in? First step: create a fresh C# Console Application. Fire up your favorite IDE—Visual Studio or VS Code will do—and spin up a new project named something fun, like SemanticKernelDemo.

Next, grab the essential NuGet packages. You’ll need:

Microsoft.SemanticKernel

Microsoft.SemanticKernel.Connectors.OpenAI

Install these via the Package Manager Console, the NuGet UI, or dotnet add package on the command line. These packages give you the kernel’s engine and the connectors needed to hook into OpenAI or Azure OpenAI models.

Now, let’s initialize the kernel using the Kernel Builder pattern. This approach centralizes your AI service configuration and creates the Kernel instance your app will use to orchestrate actions.

    // Initialize Semantic Kernel with the Azure OpenAI text generation service.
    // Replace the placeholder values with your actual Azure OpenAI credentials.
    using Microsoft.SemanticKernel;

    var kernel = Kernel.CreateBuilder()
        .AddAzureOpenAITextGeneration(
            deploymentName: "your-deployment-name",
            endpoint: "https://your-resource-name.openai.azure.com/",
            apiKey: "your-azure-openai-api-key")
        .Build();

    // For the standard OpenAI API, use AddOpenAITextGeneration instead.
    // Text generation needs a completion-style model, not a chat model:
    // .AddOpenAITextGeneration(modelId: "gpt-3.5-turbo-instruct", apiKey: "your-openai-api-key")

The snippet above configures the kernel to use an Azure OpenAI deployment. Swap in AddOpenAITextGeneration if you prefer the standard OpenAI API, which needs only a model ID and an API key. These methods target completion-style models; for chat models, the builder also provides AddAzureOpenAIChatCompletion and AddOpenAIChatCompletion. Just supply your deployment name, endpoint, and API key, and you’re off to the races.
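Hard-coded keys are fine for a first run, but you may prefer to keep them out of source control. Here’s a minimal sketch, assuming the three values live in environment variables. The variable names (AZURE_OPENAI_DEPLOYMENT, AZURE_OPENAI_ENDPOINT, AZURE_OPENAI_API_KEY) and the AzureOpenAISettings record are hypothetical helpers invented for illustration; they are not part of the Semantic Kernel SDK.

```csharp
using System;

try
{
    // Load the three values the kernel builder needs.
    var settings = AzureOpenAISettings.FromEnvironment();
    Console.WriteLine($"Using deployment '{settings.DeploymentName}' at {settings.Endpoint}");
}
catch (InvalidOperationException ex)
{
    // Fail early with a readable message instead of a confusing HTTP error later.
    Console.Error.WriteLine(ex.Message);
}

// Hypothetical settings holder; not an SDK type.
public sealed record AzureOpenAISettings(string DeploymentName, string Endpoint, string ApiKey)
{
    // Assumption: these environment variable names are our own convention.
    public static AzureOpenAISettings FromEnvironment() => new(
        Require("AZURE_OPENAI_DEPLOYMENT"),
        Require("AZURE_OPENAI_ENDPOINT"),
        Require("AZURE_OPENAI_API_KEY"));

    private static string Require(string name)
    {
        var value = Environment.GetEnvironmentVariable(name);
        if (string.IsNullOrWhiteSpace(value))
        {
            throw new InvalidOperationException($"Environment variable '{name}' is not set.");
        }
        return value;
    }
}
```

The loaded values can then replace the string literals passed as deploymentName, endpoint, and apiKey in the builder call.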

Understanding & Creating Semantic Functions

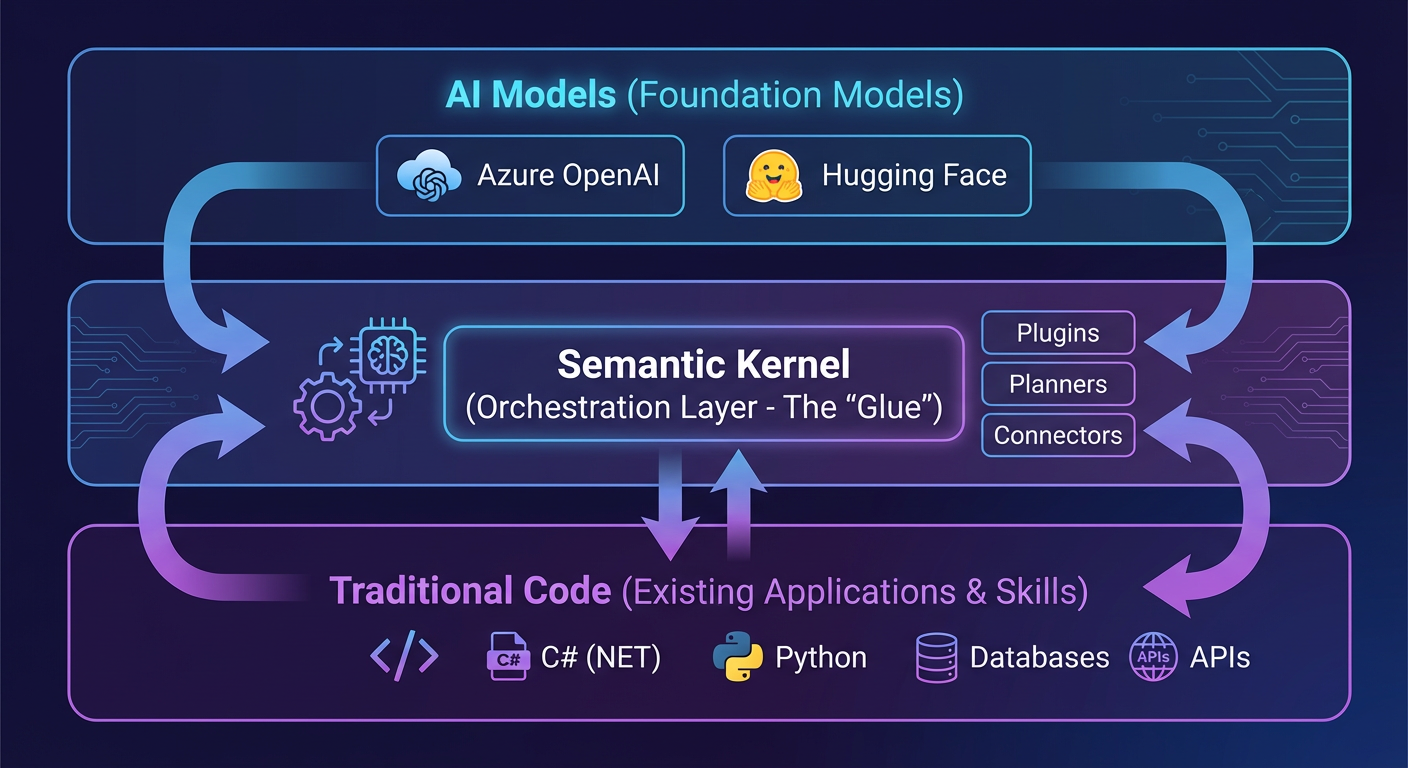

Semantic Functions are where the magic of prompting meets function calls. Think of them as little AI-powered subroutines triggered by natural language prompts. Instead of a plain method doing math, it’s a prompt like “Summarize this text” or “Generate a creative tweet” that the AI interprets and runs.

You can manage these prompts in one of two ways. The first is to keep them on disk: each function gets its own folder containing a skprompt.txt file with the prompt text and a config.json file with its settings, kind of like a prompt library. The second, and often quicker, method is inline registration. You simply define the prompt as a string in your code, mark variables with placeholders like {{$input}}, and register it as a semantic function.

Here’s a simple example of inline prompt registration:

    // Create an inline semantic function for English to French translation.
    // The {{$input}} placeholder is replaced with the actual text to translate.
    using Microsoft.SemanticKernel;

    string prompt = "Translate the following English text to French:\n{{$input}}";

    // Create the semantic function from the prompt template
    var translateFunction = kernel.CreateFunctionFromPrompt(prompt, functionName: "translateEngToFr");

    // Invoke the function with input text
    var result = await kernel.InvokeAsync(translateFunction, new KernelArguments
    {
        ["input"] = "Hello, how are you?"
    });

    Console.WriteLine(result.GetValue<string>());
    // Example output (model responses vary): Bonjour, comment allez-vous ?

This snippet sets up a semantic function named translateEngToFr that takes user input and asks the AI to translate it. When you invoke this function with “Hello, how are you?” the AI returns the French version. Easy, right?
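If you’re curious what the model actually receives, you can render a template without calling any AI service. The sketch below assumes the SDK’s default template engine (KernelPromptTemplateFactory) and adds a second placeholder, {{$language}}, which is a hypothetical variable introduced here to show how each key in KernelArguments maps to a placeholder of the same name.

```csharp
using System;
using Microsoft.SemanticKernel;

// No AI service is registered: rendering a template does not call a model.
var kernel = Kernel.CreateBuilder().Build();

// {{$language}} is a hypothetical second variable added for illustration.
string prompt = "Translate the following text to {{$language}}:\n{{$input}}";

// Build a template object using the default SK template syntax.
var template = new KernelPromptTemplateFactory().Create(new PromptTemplateConfig(prompt));

// Each argument key fills the placeholder with the matching name.
string rendered = await template.RenderAsync(kernel, new KernelArguments
{
    ["language"] = "French",
    ["input"] = "Hello, how are you?"
});

// Prints the final prompt text with both placeholders filled in.
Console.WriteLine(rendered);
```

Rendering offline like this is a handy way to debug a prompt before spending tokens on it.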

Implementing Native Functions

Now that we have AI functions, let’s bring in some regular old C# code. Native Functions are your traditional methods or classes that perform tasks like math calculations, API calls, or file operations. What makes them special in SK is that you can tag these functions with [KernelFunction] and [Description] attributes. This helps the AI understand what your native code does, allowing it to cleverly decide when to call them within more complex workflows.

Here’s a simple example: a calculator class that adds two numbers.

    // Define a native function plugin with the KernelFunction attribute.
    // The Description attribute helps the AI understand when to use this function.
    using Microsoft.SemanticKernel;
    using System.ComponentModel;

    // Register the plugin with the kernel (the class is defined below)
    var calculator = new Calculator();
    kernel.ImportPluginFromObject(calculator, "Calculator");

    // In a top-level program, type declarations must come after the statements
    public class Calculator
    {
        [KernelFunction]
        [Description("Adds two integers and returns the sum.")]
        public int Add(int a, int b)
        {
            return a + b;
        }
    }

By importing the Calculator plugin into your kernel, you make its Add method available for invocation. Later, your AI-driven pipelines can call these methods as “native functions” when they need precise, deterministic operations.
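To see the registered plugin in action, you can invoke Add through the kernel by plugin and function name. This is a minimal sketch: it repeats the Calculator class so it stands on its own, and it builds a kernel with no AI service, since a native function doesn’t need a model. The argument keys "a" and "b" are matched to the method’s parameter names.

```csharp
using System;
using System.ComponentModel;
using Microsoft.SemanticKernel;

// A kernel without any AI service is enough for native functions.
var kernel = Kernel.CreateBuilder().Build();
kernel.ImportPluginFromObject(new Calculator(), "Calculator");

// Look the function up by plugin name and function name, then pass arguments by parameter name.
var sumResult = await kernel.InvokeAsync("Calculator", "Add", new KernelArguments
{
    ["a"] = 2,
    ["b"] = 3
});

int sum = sumResult.GetValue<int>();
Console.WriteLine(sum); // prints 5

public class Calculator
{
    [KernelFunction]
    [Description("Adds two integers and returns the sum.")]
    public int Add(
        [Description("The first number to add.")] int a,
        [Description("The second number to add.")] int b) => a + b;
}
```

Going through the kernel rather than calling calculator.Add directly is what lets the same function be discovered and called by name from other parts of an SK workflow.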

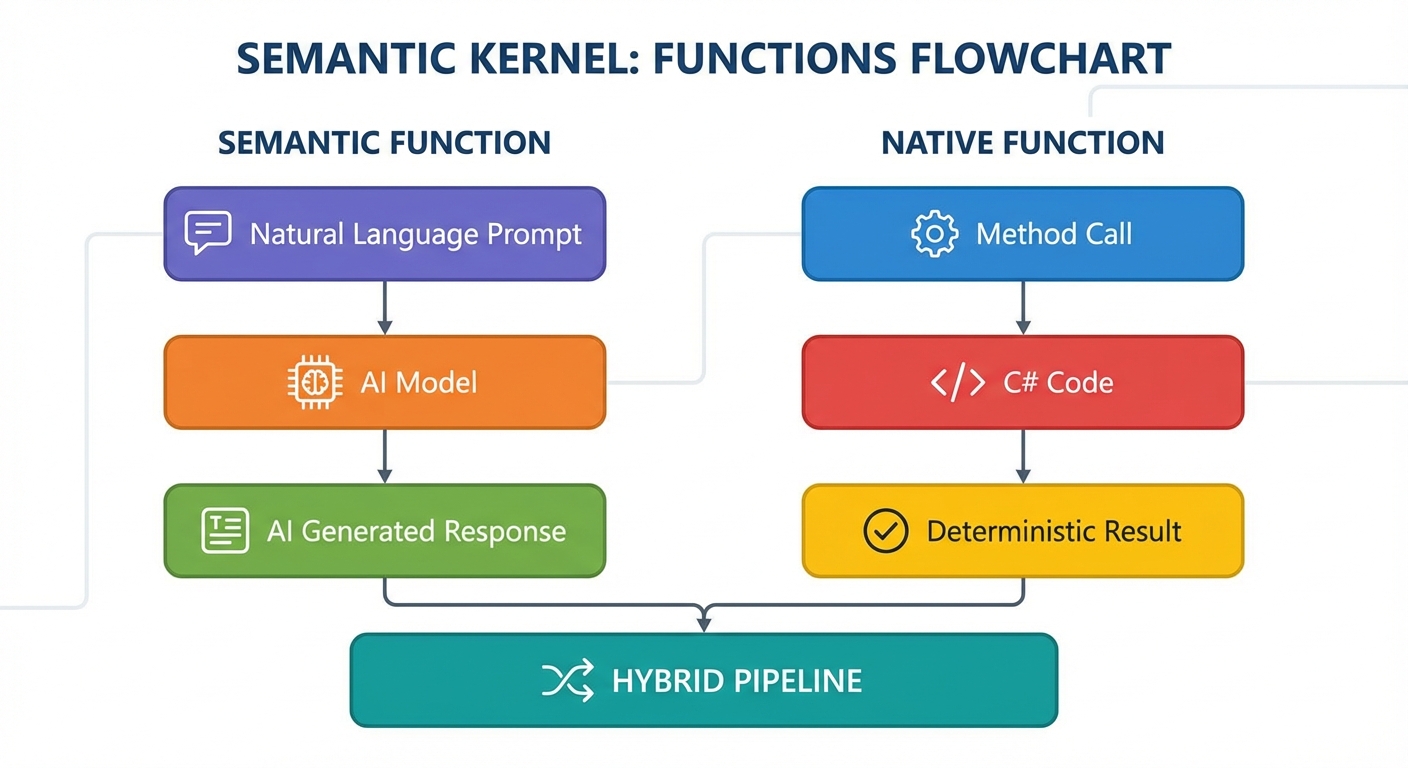

Invoking Your First Hybrid Pipeline

Alright, now for the fun part: combining the two! Hybrid pipelines let you chain semantic and native functions—mixing the genius of AI-generated responses with the reliability of your own code.

Imagine you want to receive a prompt, have the AI summarize it, then apply a native function that modifies the text further, like making it uppercase.

Here’s how you stitch that together:

    // Hybrid pipeline: combining semantic and native functions.
    // Step 1: the AI summarizes the text. Step 2: a native function converts it to uppercase.
    using Microsoft.SemanticKernel;
    using System.ComponentModel;

    // Step 1: Create the semantic function that summarizes text
    string summarizePrompt = "Summarize this text briefly:\n{{$input}}";
    var summarize = kernel.CreateFunctionFromPrompt(summarizePrompt, functionName: "summarizeText");

    // Step 2: Register the native text-processing plugin (the class is defined below)
    kernel.ImportPluginFromObject(new TextProcessor(), "TextProcessor");

    // Step 3: Invoke the pipeline manually by chaining the two functions
    var initialInput = "Microsoft Semantic Kernel makes AI integration simple!";

    // First, get the AI summary
    var summaryResult = await kernel.InvokeAsync(summarize, new KernelArguments
    {
        ["input"] = initialInput
    });
    string summary = summaryResult.GetValue<string>() ?? string.Empty;

    // Then, run the native uppercase function on the summary through the kernel
    var upperResult = await kernel.InvokeAsync("TextProcessor", "ToUpper", new KernelArguments
    {
        ["input"] = summary
    });

    Console.WriteLine("Final output: " + upperResult.GetValue<string>());

    // In a top-level program, type declarations must come after the statements
    public class TextProcessor
    {
        [KernelFunction]
        [Description("Converts text to uppercase.")]
        public string ToUpper(string input) => input.ToUpperInvariant();
    }

The output on your console will be the AI’s summarized text turned into uppercase by your native function. This chaining showcases the best of both worlds: AI handling creative and contextual tasks, and native code handling deterministic transformations.
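Since semantic and native functions are both KernelFunction objects, the chaining can be written once as a loop that feeds each result into the next step. The sketch below is one possible way to do that, not an official SK pipeline API: RunPipelineAsync is a hypothetical helper, and the summarizer is a deliberately naive stand-in (it keeps the first sentence) so the example runs without AI credentials. In a real app you would pass the prompt-based summarize function instead.

```csharp
using System;
using System.Threading.Tasks;
using Microsoft.SemanticKernel;

// No AI service is needed for this offline sketch.
var kernel = Kernel.CreateBuilder().Build();

// Stand-in for the AI summarizer (assumption: "summary" = first sentence).
KernelFunction fakeSummarize = KernelFunctionFactory.CreateFromMethod(
    (string input) => input.Split('.')[0].Trim(),
    functionName: "summarizeText");

// Native step: deterministic uppercase conversion.
KernelFunction toUpper = KernelFunctionFactory.CreateFromMethod(
    (string input) => input.ToUpperInvariant(),
    functionName: "ToUpper");

string output = await RunPipelineAsync(
    kernel,
    "Semantic Kernel chains functions. It also does more.",
    fakeSummarize,
    toUpper);

Console.WriteLine(output); // prints SEMANTIC KERNEL CHAINS FUNCTIONS

// Hypothetical helper: runs each step in order, passing the previous
// result to the next step as its "input" argument.
static async Task<string> RunPipelineAsync(Kernel kernel, string input, params KernelFunction[] steps)
{
    string current = input;
    foreach (var step in steps)
    {
        var result = await kernel.InvokeAsync(step, new KernelArguments { ["input"] = current });
        current = result.GetValue<string>() ?? string.Empty;
    }
    return current;
}
```

The helper assumes every step takes a single string parameter named input and returns a string, which matches the two functions used in this article.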

Semantic Kernel in .NET opens a fun new frontier in developer productivity, transforming how code and AI can collaborate. From setting up the environment to building semantic and native functions, you now have the foundation to build hybrid AI-powered applications.

For a deeper dive and the latest on Semantic Kernel, check out the official Microsoft video walkthrough.